- Blog

- Belkin n600 wifi adapter driver download

- Raaz movie song pk

- Download suara cendet juara nasional

- Artificial academy 2 gameplay

- Kamen rider drive episode 32

- Wechat for web page

- Download lagu dangdut koplo nagih janji palapa

- Watch twilight online free 123movies

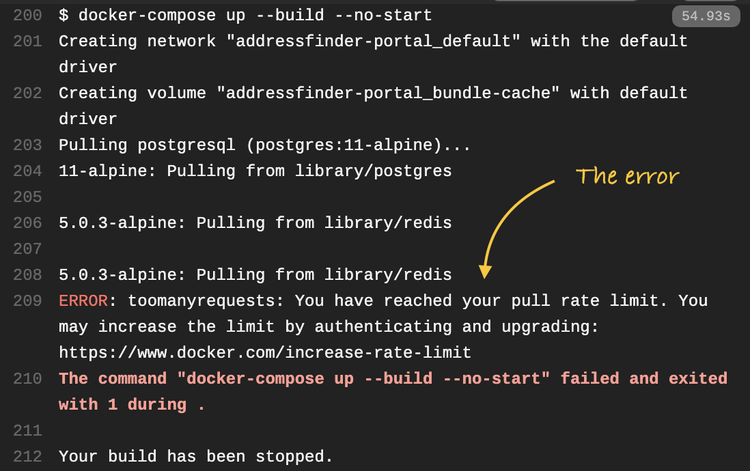

- Docker download rate limit

- Les miserables ebook download

- Mass effect andromeda mods ctd

- Latest adobe xd download

- Autocad free download autodesk

- Vocalign pro tools 10 crack

- Sony vegas free download full version for windows 10 32

Simone Mosciatti, Clemens Lange* and Jakob Blomer

CERN, Experimental Physics Department, Geneva, Switzerland

The past years have shown a revolution in the way scientific workloads are executed, thanks to the wide adoption of software containers. These containers run largely isolated from the host system, ensuring that the development and execution environments are the same everywhere. This enables full reproducibility of the workloads and therefore also of the associated scientific analyses. However, as the research software becomes increasingly complex, software images easily grow to sizes of multiple gigabytes. Downloading the full image onto every single compute node on which the containers are executed then becomes impractical.

In this paper, we describe a novel way of distributing software images on the Kubernetes platform, with which the container can start before the entire image contents become available locally (so-called “lazy pulling”). Each file required for the execution is fetched individually and subsequently cached on demand using the CernVM File System (CVMFS). This enables the execution of very large software images on potentially thousands of Kubernetes nodes with very little overhead. We present several performance benchmarks making use of typical high-energy physics analysis workloads.

A software container is a packaged unit of software that contains all dependencies required to run the software independently of the environment in which the container is executed. Since the virtualization of the environment takes place at the operating-system (OS) level, the overall resource overhead is small, in particular compared to virtual machines that virtualize at the hardware level.
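The following sketch illustrates the idea behind lazy pulling, in which a file is fetched only when it is first read and then served from a local cache. It is not CVMFS or any Kubernetes component. `RemoteStore`, `LazyImage` and `build_image` are hypothetical names, and an in-memory dictionary stands in for the remote server. Files are addressed by a SHA-256 digest of their contents, which is an assumption chosen here because identical files then share one cache entry.

```python
import hashlib
from dataclasses import dataclass, field


def digest(data: bytes) -> str:
    """Content address of a file (SHA-256 chosen here for illustration)."""
    return hashlib.sha256(data).hexdigest()


@dataclass
class RemoteStore:
    """Hypothetical stand-in for a remote, content-addressed file server."""
    objects: dict[str, bytes]
    bytes_served: int = 0  # counts bytes actually transferred to the node

    def fetch(self, key: str) -> bytes:
        data = self.objects[key]
        self.bytes_served += len(data)
        return data


@dataclass
class LazyImage:
    """Image whose files are fetched individually, only on first access."""
    manifest: dict[str, str]  # path inside the image -> content digest
    remote: RemoteStore
    cache: dict[str, bytes] = field(default_factory=dict)  # digest -> data

    def read(self, path: str) -> bytes:
        key = self.manifest[path]
        if key not in self.cache:  # cache miss: fetch just this one file
            data = self.remote.fetch(key)
            if digest(data) != key:  # verify integrity before caching
                raise ValueError(f"corrupt object for {path}")
            self.cache[key] = data
        return self.cache[key]  # cache hit: no network transfer


def build_image(files: dict[str, bytes]) -> tuple[dict[str, str], RemoteStore]:
    """Publish files to the hypothetical store and return the image manifest."""
    manifest = {path: digest(data) for path, data in files.items()}
    store = RemoteStore({digest(data): data for data in files.values()})
    return manifest, store


if __name__ == "__main__":
    files = {
        "/usr/bin/analysis": b"x" * 1_000,
        "/opt/calib/large.db": b"y" * 1_000_000,  # never read by this job
        "/etc/config.yaml": b"z" * 100,
    }
    manifest, store = build_image(files)
    image = LazyImage(manifest, store)
    image.read("/usr/bin/analysis")
    image.read("/etc/config.yaml")
    image.read("/usr/bin/analysis")  # second read is served from the cache
    print(store.bytes_served)  # 1100
```

In this toy run, the job transfers 1,100 bytes instead of the 1,001,100 bytes of the full image, because the large file it never opens is never downloaded. A real system additionally has to handle directory metadata, cache eviction and concurrent access, all of which this sketch omits.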